AI Assistance

Assistant Teams

Introduction

Assistants are most powerful when they work together. Instead of asking a single assistant to research, code, backtest, and monitor a strategy all at once, you can assemble a team of specialists and let each one focus on the part of the workflow it was built for. The Conductor sits at the top of that team and keeps the work moving from one specialist to the next, so you never have to pass an idea between assistants yourself.

Why Work in Teams

The most practical reason for Assistant teams is context. Every assistant has a finite working memory, and the more it has to hold in mind at once (the research, the code, the backtest results, the live diagnostics), the slower and less reliable it becomes. Splitting the workflow across specialists means each assistant only carries the context it actually needs to do its part. The Research Assistant does not need to know how the Paper Testing Assistant instruments orders, and the Backtest Assistant does not need to remember every correlation matrix from the research stage. The handoff itself is the compression: a clean brief moves between specialists while the noise stays behind.

The second reason is expertise. A specialist that only does one thing can be tuned harder for that thing, which yields better defaults, sharper instincts, and fewer distractions. Five focused assistants outperform one generalist trying to remember which mode it is supposed to be in.

The third reason is parallelism. One generalist assistant can only work on one project at a time, leaving every other project waiting its turn. A team of specialists runs in parallel across the portfolio. The Ideas Assistant can draft tomorrow's research on one project while the Backtest Assistant compiles yesterday's idea on another and the Live Monitoring Assistant watches a third in production.

System Prompts

Every assistant on your team is shaped by a system prompt that defines what it does, how it does it, and where its responsibilities end. The prompt is what turns a general-purpose model into a Research Assistant, a Backtest Assistant, or a Live Monitoring Assistant. It sets the objective, names the tools the assistant is allowed to reach for, and lays out the conventions the assistant should follow when it writes code or reports results. You can use the assistants QuantConnect ships out of the box, customize the prompts to match the way your team already works, or write entirely new assistants when you have a workflow we have not covered. The prompt is the contract between you and the assistant. If you change the prompt, you change the behavior.
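As a rough illustration of what a system prompt carries, the sketch below models the three parts described above (objective, allowed tools, conventions) as a small Python data structure. The `AssistantSpec` class, its fields, and the tool names are hypothetical and exist only for this example; they are not a QuantConnect API.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class AssistantSpec:
    """Hypothetical container for the parts of a system prompt."""
    name: str
    objective: str
    allowed_tools: Tuple[str, ...] = ()
    conventions: Tuple[str, ...] = ()

    def render_prompt(self) -> str:
        """Flatten the spec into plain prompt text."""
        lines = [f"You are the {self.name}.", f"Objective: {self.objective}"]
        if self.allowed_tools:
            lines.append("Allowed tools: " + ", ".join(self.allowed_tools))
        for rule in self.conventions:
            lines.append(f"- {rule}")
        return "\n".join(lines)


backtest_spec = AssistantSpec(
    name="Backtest Assistant",
    objective="Turn a validated research brief into a backtested algorithm.",
    # Tool names below are made up for illustration.
    allowed_tools=("create_backtest", "read_backtest_results"),
    conventions=("Report results as a short summary.",),
)
prompt_text = backtest_spec.render_prompt()
```

Editing any field of the spec changes the rendered prompt, which mirrors the point above: changing the prompt changes the behavior.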

Assistant Chains and Mesh

There are two ways to wire a team of assistants together, and each one fits a different kind of work.

An Assistant Chain is a linear pipeline. Work moves from one specialist to the next in a defined order, with each assistant picking up where the previous one left off. For instance, the Research Assistant hands off to the Research Validation Assistant, which hands off to the Backtest Assistant, which hands off to the Paper Testing Assistant. Chains are easy to follow, easy to audit, and well suited to workflows where the stages have a natural sequence and the output of one stage is the input of the next.
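A minimal sketch of the chain idea, assuming each assistant can be modeled as a function that takes a brief and returns an enriched brief. The function names and the dictionary-based brief are assumptions made for illustration, not how the assistants are actually implemented.

```python
from typing import Callable, Dict, List

Brief = Dict[str, str]
Stage = Callable[[Brief], Brief]


# Each stand-in stage adds its own output to the brief it received.
def research(brief: Brief) -> Brief:
    return {**brief, "research": "hypothesis drafted"}


def research_validation(brief: Brief) -> Brief:
    return {**brief, "validation": "hypothesis checked"}


def backtest(brief: Brief) -> Brief:
    return {**brief, "backtest": "backtest completed"}


def paper_testing(brief: Brief) -> Brief:
    return {**brief, "paper_testing": "deployed to paper trading"}


# The order of this list is the wiring of the chain.
CHAIN: List[Stage] = [research, research_validation, backtest, paper_testing]


def run_chain(idea: str, chain: List[Stage]) -> Brief:
    """Pass the brief through every stage in order."""
    brief: Brief = {"idea": idea}
    for stage in chain:
        brief = stage(brief)
    return brief


result = run_chain("momentum on sector ETFs", CHAIN)
```

Because the order is fixed in `CHAIN`, auditing a run amounts to reading the brief from the first key to the last.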

An Assistant Mesh, sometimes called a Cluster, is more flexible. Instead of a fixed line, one assistant (usually the Conductor) keeps a roster of callable specialists and decides which ones to invoke based on what the request needs. The path through the team is decided in flight rather than baked into the wiring. This hub-and-spoke design fits work that is less linear by nature, where the hub treats its sub-assistants like tools it can call when needed.
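The mesh pattern can be sketched in the same spirit. In this hypothetical example, a `Conductor` class holds a roster of callable specialists and picks which ones to invoke per request. The keyword matching is a deliberately crude stand-in for the routing decision a real Conductor makes; the class and method names are assumptions for illustration only.

```python
from typing import Callable, Dict, List

Specialist = Callable[[str], str]


class Conductor:
    """Hypothetical hub that treats specialists like callable tools."""

    def __init__(self, roster: Dict[str, Specialist]) -> None:
        self.roster = dict(roster)
        self.calls: List[str] = []  # record of which specialists were invoked

    def call(self, name: str, request: str) -> str:
        specialist = self.roster[name]
        self.calls.append(name)
        return specialist(request)

    def handle(self, request: str) -> List[str]:
        # Stand-in routing: decide per request which specialists are needed.
        text = request.lower()
        results: List[str] = []
        if "backtest" in text:
            results.append(self.call("Backtest Assistant", request))
        if "paper" in text:
            results.append(self.call("Paper Testing Assistant", request))
        return results


conductor = Conductor({
    "Backtest Assistant": lambda req: "backtest finished",
    "Paper Testing Assistant": lambda req: "deployed to paper trading",
})
replies = conductor.handle("Backtest this idea, then deploy it to paper trading")
```

Unlike a chain, nothing in the roster fixes an order: a request that mentions only a backtest would invoke a single specialist.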

How the Team Communicates

Assistants talk to each other through messages. Every conversation with an assistant lives in its own thread, so you can see exactly what was asked, what was answered, and what was handed off. When the Conductor routes work to a sub-assistant, it opens a conversation with that specialist and waits for results to come back, the same way a human team lead would email a teammate and then move on to other work.
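One way to picture the thread-per-conversation model is a store that keeps a separate message list for each thread. The `Message` and `ThreadStore` names, the thread identifiers, and the message text below are all hypothetical; this is a sketch of the idea, not the platform's message format.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import DefaultDict, List


@dataclass(frozen=True)
class Message:
    sender: str
    recipient: str
    body: str


class ThreadStore:
    """Hypothetical store that keeps one message list per conversation."""

    def __init__(self) -> None:
        self._threads: DefaultDict[str, List[Message]] = defaultdict(list)

    def post(self, thread_id: str, message: Message) -> None:
        self._threads[thread_id].append(message)

    def history(self, thread_id: str) -> List[Message]:
        # Return a copy so callers cannot alter the stored thread.
        return list(self._threads.get(thread_id, []))


store = ThreadStore()
# Your conversation with the Conductor lives in one thread.
store.post("conductor", Message("You", "Conductor", "Build and backtest a momentum strategy."))
# The Conductor's handoff to a specialist lives in a separate thread.
store.post("backtest-assistant", Message("Conductor", "Backtest Assistant", "Generate the algorithm and run a backtest."))
store.post("backtest-assistant", Message("Backtest Assistant", "Conductor", "Backtest completed."))
store.post("conductor", Message("Conductor", "You", "The backtest completed."))
```

Keeping the handoff in its own thread is what lets you inspect what was asked of the specialist without it cluttering the main conversation.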

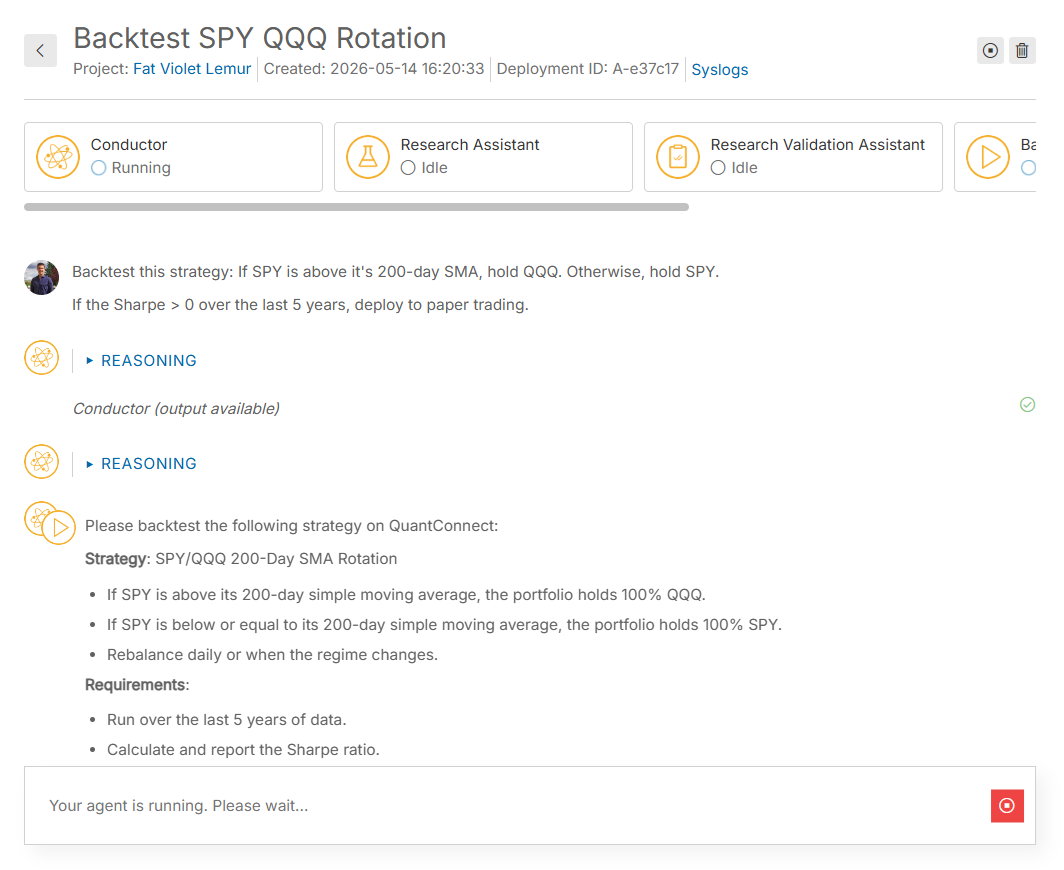

The following image shows an example of a deployment with Callable Assistants (an Assistant Mesh). The main page shows the conversation with the Conductor. To view the conversations of the sub-assistants, click one of the assistants at the top of the page. In this example, the Conductor called on the Backtest Assistant to generate the algorithm, then called on the Paper Testing Assistant to deploy the algorithm to paper trading once the backtest successfully completed.