Popular Libraries

XGBoost

Get Historical Data

Get some historical market data to train and test the model. For example, to get data for the SPY ETF during 2020 and 2021, run:

qb = QuantBook()

symbol = qb.add_equity("SPY", Resolution.DAILY).symbol

history = qb.history(symbol, datetime(2020, 1, 1), datetime(2022, 1, 1)).loc[symbol]

Prepare Data

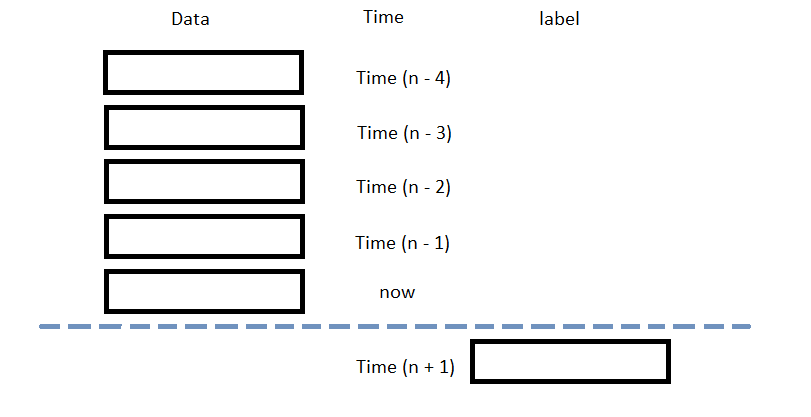

You need some historical data to prepare the data for the model. If you have historical data, manipulate it to train and test the model. In this example, use the following features and labels:

| Data Category | Description |

|---|---|

| Features | The last 5 closing prices |

| Labels | The following day's closing price |

The following image shows the time difference between the features and labels:

Follow these steps to prepare the data:

- Perform fractional differencing on the historical data.

- Loop through the df DataFrame and collect the features and labels.

- Convert the lists of features and labels into numpy arrays.

- Standardize the features and labels.

- Split the data into training and testing periods.

df = (history['close'] * 0.5 + history['close'].diff() * 0.5)[1:]

Fractional differencing helps make the data stationary yet retains the variance information.
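To make the transformation concrete, here is a minimal sketch on a made-up price series (the numbers are illustrative, not market data). The expression is an equal-weight blend of the price level and its one-day change; the 0.5 weights are taken from the line above, and `.iloc[1:]` is used here as an equivalent of `[1:]`.

```python
import pandas as pd

# Hypothetical closing prices, for illustration only.
close = pd.Series([100.0, 102.0, 101.0, 105.0])

# Half the price level plus half the one-day change.
# The first row is dropped because diff() has no prior day to subtract.
blended = (close * 0.5 + close.diff() * 0.5).iloc[1:]

print(blended.tolist())  # [52.0, 50.0, 54.5]
```

The result stays close to half the price level, while day-to-day changes are emphasized relative to the raw series.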

n_steps = 5

features = []

labels = []

for i in range(len(df)-n_steps):
    features.append(df.iloc[i:i+n_steps].values)
    labels.append(df.iloc[i+n_steps])
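To see how the loop pairs each window with the value that follows it, here is a small sketch on a made-up list with a window of 2 instead of 5. The values and the shorter window are assumptions chosen only to keep the output readable.

```python
series = [10, 11, 12, 13, 14]   # made-up values
n_steps = 2                     # window length for this illustration

features, labels = [], []
for i in range(len(series) - n_steps):
    features.append(series[i:i + n_steps])  # the window of past values
    labels.append(series[i + n_steps])      # the value right after the window

print(features)  # [[10, 11], [11, 12], [12, 13]]
print(labels)    # [12, 13, 14]
```

Consecutive windows overlap, so a series of length N yields N - n_steps samples.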

features = np.array(features)
labels = np.array(labels)

X = (features - features.mean()) / features.std()
y = (labels - labels.mean()) / labels.std()

X_train, X_test, y_train, y_test = train_test_split(X, y)
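Note that `train_test_split` shuffles rows by default, so with overlapping windows some test samples share values with training samples. If you want the test set to be a strictly later period, one option is a chronological split. The sketch below is an alternative under that assumption, not part of the original example; `chronological_split` and `test_fraction` are hypothetical names, and the arrays are stand-in data.

```python
import numpy as np

def chronological_split(X, y, test_fraction=0.25):
    """Split time-ordered samples so the test set is the most recent block."""
    split = int(len(X) * (1 - test_fraction))
    return X[:split], X[split:], y[:split], y[split:]

# Stand-in data: 8 time-ordered samples with 5 features each.
X_demo = np.arange(40).reshape(8, 5)
y_demo = np.arange(8)

X_train_demo, X_test_demo, y_train_demo, y_test_demo = chronological_split(X_demo, y_demo)
print(len(X_train_demo), len(X_test_demo))  # 6 2
```

Calling `train_test_split(X, y, shuffle=False)` from scikit-learn should give a comparable result without a custom helper.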

Train Models

You need to prepare the historical data for training before you train the model. If you have prepared the data, build and train the model. In this example, train a gradient-boosted random forest for future price prediction. Follow these steps to create the model:

- Split the data for training and testing to evaluate the model.

- Format the training set into an XGBoost matrix.

- Train the model with parameters.

X_train, X_test, y_train, y_test = train_test_split(X, y)

dtrain = xgb.DMatrix(X_train, label=y_train)

params = {
    'booster': 'gbtree',
    'colsample_bynode': 0.8,
    'learning_rate': 0.1,
    'lambda': 0.1,
    'max_depth': 5,
    'num_parallel_tree': 100,
    'objective': 'reg:squarederror',
    'subsample': 0.8,
}

model = xgb.train(params, dtrain, num_boost_round=10)
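The "gradient-boosted random forest" description follows from two settings: `num_parallel_tree` grows that many trees in each boosting round, and `num_boost_round` sets the number of rounds. The row and column subsampling (`subsample`, `colsample_bynode`) is what makes each round resemble a random forest. A rough sketch of the resulting ensemble size, assuming a single-output regression objective:

```python
# Values copied from the training example above.
num_parallel_tree = 100   # trees grown side by side in each boosting round
num_boost_round = 10      # number of boosting rounds

# Each round adds one forest, so the ensemble size is the product.
total_trees = num_parallel_tree * num_boost_round
print(total_trees)  # 1000
```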

Test Models

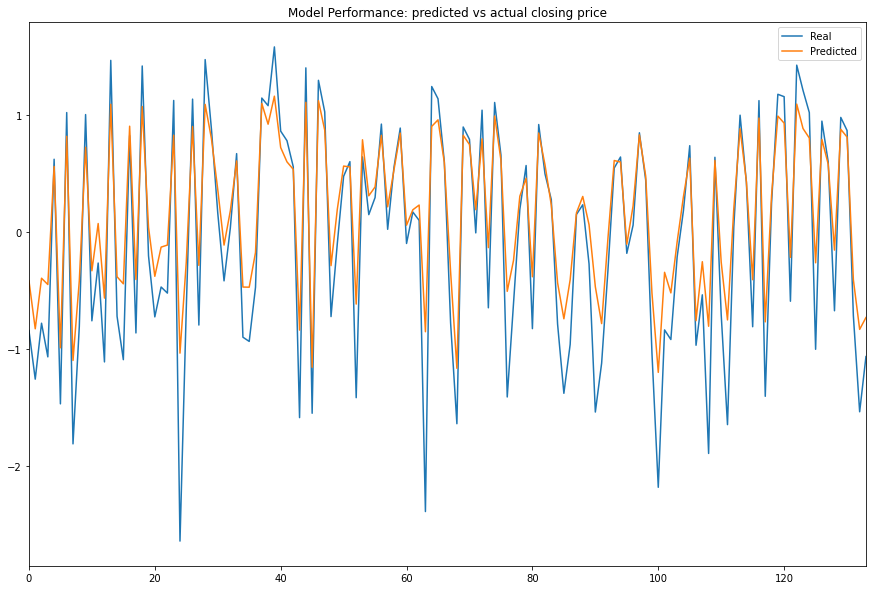

You need to build and train the model before you test its performance. If you have trained the model, test it on the out-of-sample data. Follow these steps to test the model:

- Format the testing set into an XGBoost matrix.

- Call the predict method with the testing dataset.

- Plot the predicted values against the actual values to assess the model's predictive power.

dtest = xgb.DMatrix(X_test, label=y_test)

y_predict = model.predict(dtest)

df = pd.DataFrame({'Real': y_test.flatten(), 'Predicted': y_predict.flatten()})

df.plot(title='Model Performance: predicted vs actual closing price', figsize=(15, 10))

plt.show()
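A plot gives a visual impression; a couple of summary numbers can complement it. The sketch below computes the root mean squared error and R² with NumPy on made-up arrays that stand in for `y_test` and `y_predict`. `regression_metrics` is a hypothetical helper name, not part of any library used above.

```python
import numpy as np

def regression_metrics(actual, predicted):
    """Return (RMSE, R^2) for two equal-length 1-D arrays."""
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    residuals = actual - predicted
    rmse = np.sqrt(np.mean(residuals ** 2))
    ss_res = np.sum(residuals ** 2)                  # unexplained variation
    ss_tot = np.sum((actual - actual.mean()) ** 2)   # total variation
    return rmse, 1.0 - ss_res / ss_tot

# Made-up values for illustration only.
rmse, r2 = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 2.0])
print(rmse, r2)
```

Because the labels were standardized, the RMSE here is in units of standard deviations rather than dollars.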

Store Models

You can save and load xgboost models using the Object Store.

Save Models

Follow these steps to save models in the Object Store:

- Set the key name of the model to be stored in the Object Store.

- Call the get_file_path method with the key.

- Call the dump method with the model and file path.

model_key = "model"

file_name = qb.object_store.get_file_path(model_key)

This method returns the file path where the model will be stored.

joblib.dump(model, file_name)

After you dump the model with the joblib module, you can load it in a later session instead of retraining it.

Load Models

You must save a model into the Object Store before you can load it from the Object Store. If you saved a model, follow these steps to load it:

- Call the contains_key method.

- Call the get_file_path method with the key.

- Call the load method with the file path.

qb.object_store.contains_key(model_key)

This method returns a boolean that indicates whether model_key is in the Object Store. If the Object Store does not contain model_key, save the model under that key before you proceed.

file_name = qb.object_store.get_file_path(model_key)

This method returns the path where the model is stored.

loaded_model = joblib.load(file_name)

This method returns the saved model.
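The check-then-load steps can be folded into a small guard. The sketch below uses a temporary file as a stand-in for the path returned by `get_file_path`, and a plain dictionary in place of a trained model, so that it runs outside a research notebook. `load_or_create` is a hypothetical helper, not a QuantConnect or joblib function.

```python
import os
import tempfile
import joblib

def load_or_create(path, create_fn):
    """Load the object at `path` if it exists; otherwise create and save it."""
    if os.path.exists(path):
        return joblib.load(path)
    obj = create_fn()
    joblib.dump(obj, path)
    return obj

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "model")  # stand-in for the Object Store file path
    first = load_or_create(path, lambda: {"trained": True})    # creates and saves
    second = load_or_create(path, lambda: {"trained": False})  # loads the saved copy

print(first, second)
```

In a notebook, the existence check would presumably be `qb.object_store.contains_key(model_key)` and `create_fn` would wrap the training steps.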

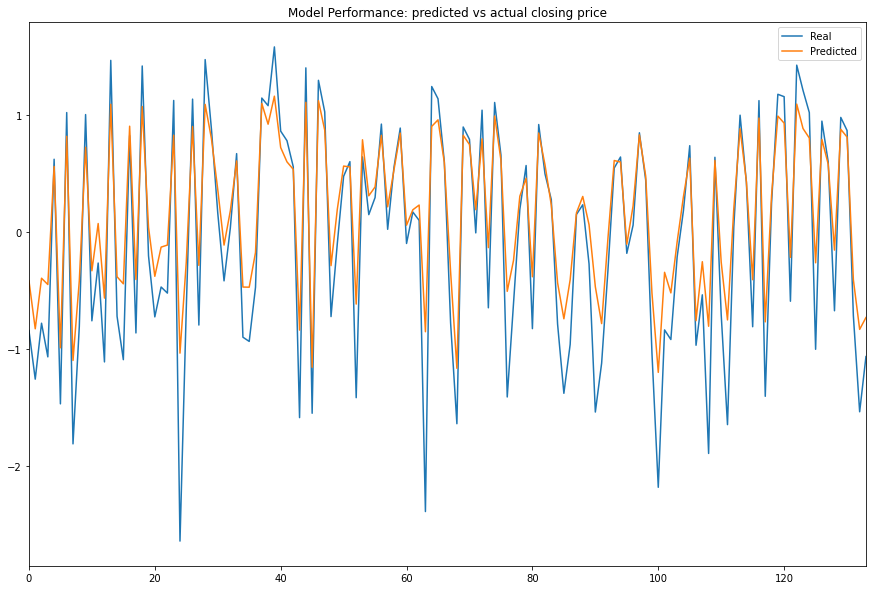

To verify loading the model was successful, test the model.

y_pred = loaded_model.predict(dtest)

df = pd.DataFrame({'Real': y_test.flatten(), 'Predicted': y_pred.flatten()})

df.plot(title='Model Performance: predicted vs actual closing price', figsize=(15, 10))

Examples

The following examples demonstrate some common practices for using the xgboost library.

Example 1: Predict Next Price

The following research notebook uses an XGBoost model to predict the next day's closing price from the previous 5 daily closes.

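# Import the xgboost library and others.
from datetime import datetime

import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import xgboost as xgb
from sklearn.model_selection import train_test_split
import joblib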

# Instantiate the QuantBook for researching.

qb = QuantBook()

# Request the daily SPY history with the date range to be studied.

symbol = qb.add_equity("SPY", Resolution.DAILY).symbol

history = qb.history(symbol, datetime(2020, 1, 1), datetime(2022, 1, 1)).loc[symbol]

# Apply fractional differencing to the close price; the result supplies both the features and the labels.

df = (history['close'] * 0.5 + history['close'].diff() * 0.5)[1:]

# Use the previous 5 days of differenced closes as the features.
# Use the following day's differenced close as the label.

n_steps = 5

features = []

labels = []

for i in range(len(df)-n_steps):
    features.append(df.iloc[i:i+n_steps].values)
    labels.append(df.iloc[i+n_steps])

# Clean up and process the data for faster convergence.

features = np.array(features)

labels = np.array(labels)

X = (features - features.mean()) / features.std()

y = (labels - labels.mean()) / labels.std()

# Split the data into a training set and test set for validation.

X_train, X_test, y_train, y_test = train_test_split(X, y)

# Format the training set into an XGBoost matrix.

dtrain = xgb.DMatrix(X_train, label=y_train)

# Train the model with parameters.

params = {
    'booster': 'gbtree',
    'colsample_bynode': 0.8,
    'learning_rate': 0.1,
    'lambda': 0.1,
    'max_depth': 5,
    'num_parallel_tree': 100,
    'objective': 'reg:squarederror',
    'subsample': 0.8,
}

model = xgb.train(params, dtrain, num_boost_round=10)

# Format the testing set into an XGBoost matrix.

dtest = xgb.DMatrix(X_test, label=y_test)

# Call the predict method with the testing dataset.

y_predict = model.predict(dtest)

# Plot the predicted values against the actual values.

df = pd.DataFrame({'Real': y_test.flatten(), 'Predicted': y_predict.flatten()})

df.plot(title='Model Performance: predicted vs actual closing price', figsize=(15, 10))

plt.show()

# Store the model in the object store to allow accessing the model in the next research session or in the algorithm for trading.

model_key = "model"

file_name = qb.object_store.get_file_path(model_key)

joblib.dump(model, file_name)